Learning how the brain learns

Humans are exquisitely able to sort the things we see into categories: houses, trees, grass, people, faces, etc. When our visual categorization abilities are confused, by optical illusions for example, it can be unsettling (painting by Oleg Shuplyak).

Whether it's an infant figuring out why a dog isn't a cat, or a retiree picking up the rules of baseball for the first time, humans have an unparalleled capacity for learning. The vast majority of our behaviors, both conscious and unconscious, are guided by our ability to store meaningful experiences in memory and recall them when needed.

As complex as it may seem, scientists are steadily making progress in unraveling how the brain accomplishes this feat. For University of Chicago neuroscientist David Freedman, PhD, associate professor of neurobiology, the key to better understanding the brain's ability to learn has been to focus on a specific cognitive function where learning, memory and decision making all intersect – visual categorization.

The brains of humans and other primates are remarkably adept at sorting the objects we see into categories. With a quick glance, we can distinguish cats from cars, hamburgers from rocks, people from road signs, and we can do it all without much effort. The ability to categorize and assign meaning to the tremendous amount of information our eyes take in is essential for our daily lives and impacts nearly every decision we make.

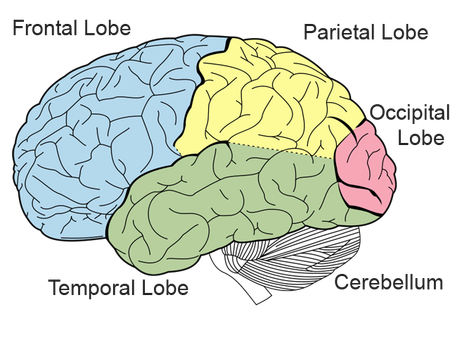

In previous studies, Freedman and his colleagues identified regions of the brain, and even specific neural circuits, that are responsible for visual categorization. They found that the parietal and prefrontal cortices – brain areas closely tied to higher cognitive functions – house populations of neurons that encode visual category information. Remarkably, the activity of individual neurons in these areas can be used to predict a subject's categorical decisions.

However, scientists didn't know what happens during the process of learning new categories, or how learning affects neural circuits in these brain areas. So Freedman and his team, led by graduate student Arup Sarma in collaboration with postdoctoral researcher Nicolas Masse, devised a series of experiments in which they could measure and compare the activity of the brain before, during and after it learned new visual categories.

A game of brains

In a study published in the January 2016 issue of Nature Neuroscience, Freedman's team trained monkeys to play a simple visual recognition game. The monkeys would hold a lever while observing a visual pattern moving in a certain direction across a computer screen. After a short blank delay, a second moving pattern would appear. If it moved in the same direction as the first pattern, the subjects had to let go of the lever to receive a tasty reward. If they responded incorrectly, they received no reward.

The team then created a second game involving visual categorization. The same visual patterns would move across the screen as before, followed by a delay. But this time, instead of indicating whether the patterns matched each other exactly, the animals had to assign the patterns to one of two broad categories, which they learned over many practice sessions.

"In order to solve a task like this, the subjects have to learn two entirely new visual categories," Freedman said. "They have to be able to remember the first pattern, remember the categories, recognize if the second pattern matches and respond appropriately by letting go of the lever. By recording from neurons as they play these games, we can look at exactly what happens in the brain as new categories are learned."

The researchers focused on measuring the activity of neurons from the two brain areas known to be involved in visual categorization, the prefrontal cortex and the posterior parietal cortex (specifically, neurons in the lateral intraparietal area).

Throughout both tasks, prefrontal cortex neurons displayed activity indicating that they were involved both in recognizing the patterns and in storing them in short-term memory. This memory-related activity was evident from neurons remaining active during the delay period between patterns.

However, neurons in the posterior parietal cortex displayed an unexpected pattern of activity. In the first task (before learning the categories), these neurons very accurately encoded information about the motion of the dots. But they were quiet during the delay period, showing that they did not participate in storing the patterns in short-term memory. After the subjects mastered the second task (and learned the new categories), these neurons became active throughout all phases of the task, including the delay.

"The act of learning these new categories completely changed the neural activity we saw during the delay period, when subjects have to remember information about the first pattern," Freedman said. "It caused this one brain area, the posterior parietal cortex, to become directly engaged in short-term memory, when before it wasn't. This was a big surprise."

Previous studies have shown that the prefrontal and parietal cortices function quite similarly during visual categorization tasks, despite sitting in different lobes separated by a great distance (for the brain). But the results of these new experiments reveal an unambiguous difference between the two: posterior parietal cortex neurons become involved in short-term memory when visual categorization is involved, but not during a simpler task like discrimination.

"It suggests that there's something special about categorization learning that changes the function of the parietal cortex, and causes it to be more engaged in this task compared to other simple memory tasks," Freedman said.

Although it's still unclear why parietal cortex neurons behave this way, Freedman and his team now have compelling evidence that these brain areas not only perform independent operations but also coordinate their activity for visual categorization. They hypothesize that the parietal cortex may play a leading role in learning visual categories, and are now designing experiments to test their ideas.

"It's an important clue for building experiments going forward, to understand what is it that engages a particular brain area in a certain task or a certain function," Freedman said. "This opens the door to really promising and interesting lines of work."


The study, "Task-specific versus generalized mnemonic representations in parietal and prefrontal cortices," was supported by the National Institutes of Health, a McKnight Scholar award, the Alfred P. Sloan Foundation, a National Science Foundation CAREER award and the Brain Research Foundation. Additional authors include Nicolas Y Masse from the University of Chicago and Xiao-Jing Wang from New York University.