Window to the brain

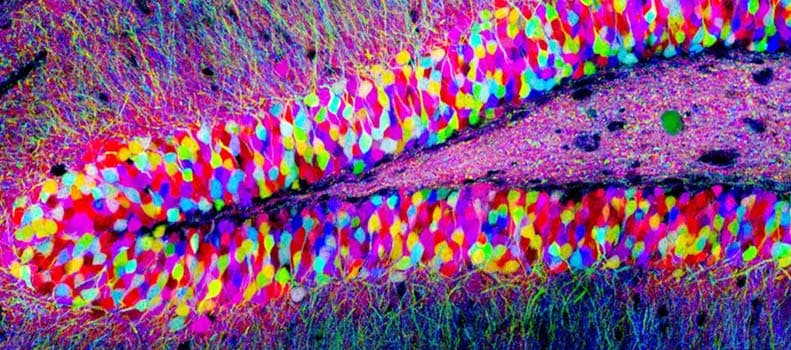

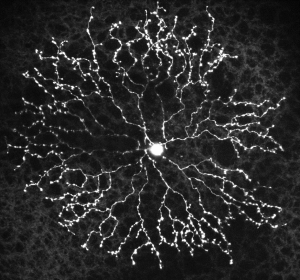

Individual neurons in the brain can be distinguished from neighboring neurons using fluorescent proteins in this "Brainbow" (Lichtman et al.).

For the past 30-some-odd years, William Newsome, PhD, Director of the Neurosciences Institute at Stanford University, has been working to understand the biology behind the brain's most complex functions, such as visual perception and decision-making. How? By studying the brain, one neuron at a time. At first blush, this may seem a Sisyphean task (roughly analogous to reverse engineering a supercomputer one transistor at a time). But it is in fact a remarkably fruitful approach, yielding numerous fundamental insights into the innermost workings of our brains.

Professor William Newsome will speak about the neuroscience of perception and decision making at the first Mind and Brain Annual Public Lecture on Monday, May 16, 2016.

This Monday, May 16, Newsome will speak about this particular branch of neuroscience - the good, the bad, and the ugly - and look at what the future holds for the study of the brain at the inaugural Mind and Brain Annual Public Lecture, hosted by the Grossman Institute for Neuroscience, Quantitative Biology and Human Behavior at the University of Chicago. The lecture series is free and open to the public, and runs from 4 to 6 p.m. at the Chicago Cultural Center.

Newsome's work is certainly not foreign to neuroscientists at the University of Chicago, many of whom are right in the thick of this field of study. In a story that will appear in the Spring issue of Medicine on the Midway, we take a look at the work of two of UChicago's brightest young neuroscientists. Like Newsome, they use the visual system as a window to the brain. By understanding the activity of individual neurons and neural circuits involved in vision, they aim to unlock the biology of not just how we see, but how we think, how we remember, how we learn, how we make decisions and more.

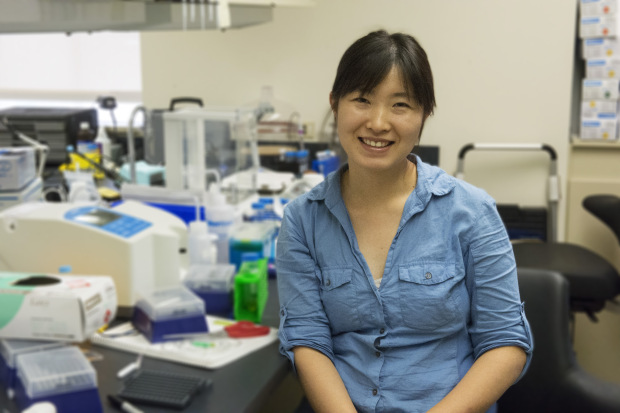

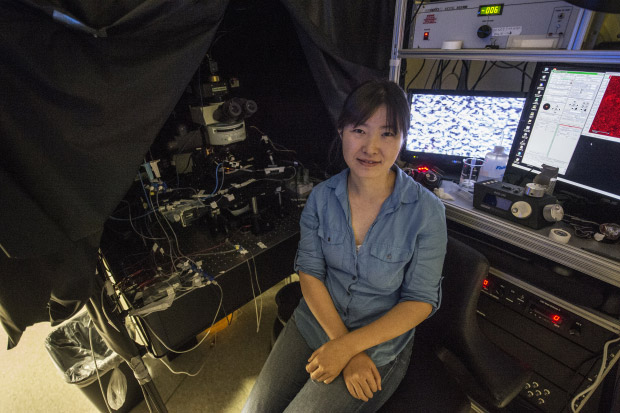

Wei Wei, PhD, in her Abbott Memorial Hall lab at the University of Chicago. (Robert Kozloff)

Window to the brain

When isolated from the eye, the retina looks like any other tiny piece of nondescript tissue. What it does not look like is a fully functional biological computer. But you know what they say about appearances.

One such retina, taken from a genetically engineered mouse, is inside a small glass chamber, illuminated only by infrared light in a darkened laboratory room. It rests beneath a custom-built microscope decorated with a byzantine array of cameras, pumps, mirrors and wires, like the neuroscience equivalent of something out of a Mad Max movie.

A microscope in action in Wei's lab.

"We supply the retina with oxygen and nutrients so that it's still alive and can respond to visual stimuli," said neurobiologist Wei Wei, PhD. "It can 'see' as if it was still in the eye."

With the flick of a dial, she projects an image about the size of a grain of salt onto the retina. A small group of photo-sensitive neurons fire in response, triggering a cascade of complex neural activity. Instead of traveling to a brain to be processed as sight, however, these signals are recorded by electrodes and stored on hard drives.

What these neurons "see" will be analyzed by a team of human brains, and studied and pondered on by many more - all working to understand how our own brains work. The visual system is the brain's window to the world. But for neuroscientists at the University of Chicago and around the world, it is the ideal window to the brain.

Around half of the human cortex, the brain layer responsible for higher cognitive functions, is directly or indirectly involved in processing vision. No sense demands as much cranial real estate, or contributes as much to how we interact with the world around us. By deconstructing the neurobiology of vision, researchers hope to reveal the innermost workings of every part of this enigmatic organ - not just how we see, but how we think, how we remember, how we make decisions, and more.

Soft-spoken and laser-sharp, Wei, an assistant professor in the Department of Neurobiology, is one of a number of highly accomplished investigators studying the brain by way of the visual system. She's also remarkably young for her position, partly because she started a prestigious university-level program in China at only age 14. As a graduate student, she delved into the fundamental building block of neural signaling - the synapse, or point of connection between two individual neurons. But as she pursued postdoctoral studies and launched her own lab, her interests expanded toward neural networks and the retina.

"Many people intuitively think of the retina as a camera, just capturing videos of the visual world and relaying it to the brain," Wei said. "But it's not. It's much more sophisticated. The retina is more like a little computer that starts processing visual inputs into multiple streams of information, long before any signals reach the brain."

Whether it travels light years from the stars or nanoseconds from a light bulb, every photon we see ends its journey at the retina, a thin layer of tissue in the back of the eye and the only visible part of the brain. Networks of neurons here calculate information about motion, color, direction, light intensity and more, while ignoring irrelevant features. The combination of around 20 to 40 different types of these neural circuits, each responding very differently to the same photons, is what the brain ultimately uses to construct vision. As the rodent retina under the microscope in her lab performs its computations, Wei and her team are most interested in the activity of one particular cell class, the retinal ganglion cell. These are the only neurons directly wired to the rest of the brain, and the signals they send down the optic nerve depend entirely on what they "see."

A starburst amacrine cell, a class of interneuron in the retina

In this case, the researchers are focused on ganglion cells that respond only to specific directions of motion. As the projector shines an image of a tiny black bar moving from left to right on their receptive field, these cells fire enthusiastically. When the bar moves from right to left, they remain silent.

This selectivity is possible because each ganglion cell receives signals from dozens of intermediary neurons, which in turn are processing the spatial and timing activity of 100 or more photoreceptors. Like resistors or conductors in an electrical circuit, intermediary neurons organize the flow of information by increasing or decreasing the activity of other neurons in their network. Their combined effect is how a ganglion cell "sees" motion.

Wei wants to understand and reverse engineer this circuit. To do so, she breaks parts of it. Leveraging a powerful combination of molecular, genetic and imaging techniques, her lab can manipulate its activity in extremely precise ways. For this experiment, her team uses a genetic "switch" to turn off only synapses that transmit an activity-decreasing signal. They then ask: if the ganglion cell doesn't receive this information, will it still be selective to direction?

The grossly oversimplified answer is, surprisingly, yes. Contrary to assumptions, these neurons retain a great deal of their direction selectivity even in the absence of half the input they normally receive.

"Our work has shown that there must be multiple neural mechanisms that interact synergistically to ensure robust detection of motion, and are used together to foolproof the system," said Wei, who published the results of this study in the Journal of Neuroscience last fall. "If you disrupt any one particular mechanism, even one we thought was absolutely critical, there are others that can compute motion direction, albeit suboptimally."

View from on high

Many more studies are needed to answer the numerous questions that Wei's findings raise, not least of which is how these circuits continue to compute. But the implications reach well beyond vision. Regardless of location or function, neural circuits work in largely the same way: they receive some input, send signals to increase or decrease each other's activity, and collectively generate an output. Having multiple mechanisms built into this process could be one reason the brain can be relied upon so dependably to carry out all its complex functions.
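
For readers who like to tinker, the idea of redundant mechanisms can be made concrete with a toy model. The Python sketch below is only an illustration, not the model used in Wei's lab: a bar sweeps across a row of inputs, and a single "ganglion cell" sums them. It is given two hypothetical mechanisms, a delayed inhibitory veto from one side and an excitatory boost from the other. The boost mechanism, the weights `w_inh` and `w_fac`, and all function names are assumptions made up for this example; the article does not say which mechanisms take over when inhibition is switched off.

```python
def sweep(n_positions, left_to_right):
    """Return stim[t][i] = 1 when the bar covers input i at time step t."""
    stim = [[0] * n_positions for _ in range(n_positions)]
    for t in range(n_positions):
        i = t if left_to_right else n_positions - 1 - t
        stim[t][i] = 1
    return stim


def ganglion_response(stim, w_inh, w_fac):
    """Sum the rectified drive onto one toy ganglion cell over a whole sweep.

    w_inh: strength of delayed inhibition from the right-hand neighbor
           (hypothetical stand-in for the activity-decreasing synapses).
    w_fac: strength of an excitatory boost when the left-hand neighbor
           was active one step earlier (a second, assumed mechanism).
    """
    n = len(stim[0])
    total = 0.0
    for t in range(len(stim)):
        prev = stim[t - 1] if t > 0 else [0] * n
        for i in range(n):
            left_before = prev[i - 1] if i > 0 else 0
            right_before = prev[i + 1] if i < n - 1 else 0
            excitation = stim[t][i] * (1.0 + w_fac * left_before)
            inhibition = w_inh * right_before
            total += max(0.0, excitation - inhibition)  # no negative firing
    return total


def direction_selectivity_index(w_inh, w_fac, n_positions=10):
    """(preferred - null) / (preferred + null); 0 means no selectivity."""
    pref = ganglion_response(sweep(n_positions, True), w_inh, w_fac)
    null = ganglion_response(sweep(n_positions, False), w_inh, w_fac)
    return (pref - null) / (pref + null)


if __name__ == "__main__":
    intact = direction_selectivity_index(w_inh=1.0, w_fac=1.0)
    blocked = direction_selectivity_index(w_inh=0.0, w_fac=1.0)
    print(f"inhibition intact:  DSI = {intact:.2f}")
    print(f"inhibition blocked: DSI = {blocked:.2f}")
```

With these made-up weights, the selectivity index falls from 0.90 with inhibition intact to about 0.31 with inhibition blocked. The toy cell still prefers left-to-right motion, only less strongly, which loosely mirrors the "albeit suboptimally" in Wei's description. Setting both weights to zero removes the preference entirely.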

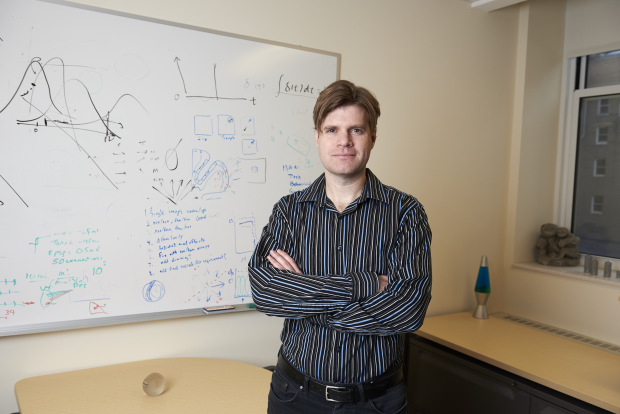

David Freedman, PhD, pictured in his office at the University of Chicago. (Joel Wintermantle)

"We have this amazing ability to think, to reason, to make sense of things in our environment," said David Freedman, PhD, associate professor in the Department of Neurobiology. "The brain is able to take in sensory information and produce meaningful experiences and behavior. We think there is a general set of rules for how this information flows through the brain, and identifying these rules at the circuit level is one of the greatest challenges in science."

Whatever the stereotype of a neuroscientist might be, it's unlikely that Freedman fits it. When he moonlights with his funk and soul-jazz band (co-founded by fellow UChicago neuroscientist Sliman Bensmaia, PhD) at various bars and venues across Chicago, few in the audience would probably guess that the lead guitarist with the dark blond locks is also chair of a computational neuroscience graduate program.

For Freedman, the visual system is a window to our higher cognitive functions. His research focuses on a particular phenomenon known as visual categorization. Human and primate brains are remarkably adept at sorting objects we see into categories. With a quick glance, we can distinguish cats from cars, hamburgers from rocks, people from road signs, and we can do it all without much effort. The ability to categorize and assign meaning to everything we see is essential for our daily lives. By describing the neural basis for this ability, Freedman hopes to shed light on all the processes it involves - learning, attention, memory, perception and decision making.

To do so, he utilizes a creative and sophisticated blend of classic and innovative modern techniques - not so dissimilar to his music. The concept is relatively straightforward: ask an animal to play a simple video game and look at what's happening in its brain. From his early days as a graduate student at MIT, to his postdoctoral studies at Harvard and his own lab at UChicago, Freedman has been a pioneer in this approach. The game looks roughly like this: a monkey holds a lever while observing a simple moving pattern on a computer screen. Something happens - maybe the color changes, or the direction shifts. If the animal sees this, it's trained to release the lever. It then receives a tasty snack.

A sample visual categorization task from Freedman's lab.

Through different permutations of this game, Freedman and his team can ask animals to pay attention to certain things, to report perceptual changes, to demonstrate learning by sorting patterns into different categories, to store things in visual memory, and much more. At the same time, the researchers record the activity of up to 100 neurons at a time, often in multiple brain locations, to study individual neurons engaged in these higher cognitive functions.

His efforts have been remarkably fruitful. Freedman and his colleagues identified specific neurons in the prefrontal and parietal cortexes that are possibly the first step in turning abstract visual data from the eyes into categories. Not only do these neurons encode information about category, but the activity of certain individual neurons can even be used to predict what category an animal will place a visual item in before it ever reports its decision.

His work revealed other neurons and their precise roles - some simultaneously carry category and spatial information, some adjust their response depending on what a subject is paying attention to, and more. He has also shed light on how these different brain areas might work together. In a study published in Nature Neuroscience in early 2016, his team showed how specific neurons in the parietal cortex become involved in short-term memory only for visual categorization, and not simpler tasks.

"If you look at, say, a car moving down the street, you not only recognize what it is, but you can tell its location, where it's moving and how fast it's going," Freedman said. "We've learned a lot about the neurons that encode individual features in a scene like this. But what we know almost nothing about is how different parts of the brain integrate that information. That's where we're focusing our research now, and where we hope we'll make the biggest impact on our understanding of the rules that govern the functioning of the brain."

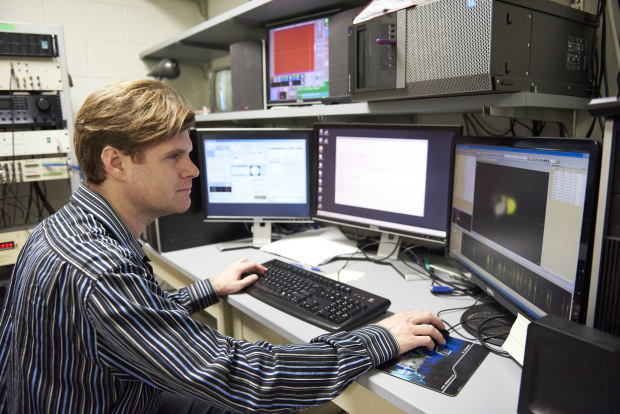

David Freedman, PhD, pictured in his lab at the University of Chicago. (Joel Wintermantle)

The challenge faced by neuroscientists like Freedman and Wei is hard to overstate. The human brain is famously the most complex known structure in the universe, containing some 100 billion neurons that form 100 trillion connections between them.

Deconstructing how visual neural circuits work, for simple computations in the eye and for complex ones in the brain, is like reverse engineering the GPU of a supercomputer - extraordinarily complicated, and still just one part of a much more complicated whole. But it's also hard to overstate the rewards if they are successful. This collection of cells forms a unified mind; one that has relationships, creates art, music and space stations, feels joy or sadness. Their misfiring results in disorders, from autism to depression to Alzheimer's.

"If we can truly understand how the brain works, it will be one of the most remarkable things for us as a species to have accomplished," Freedman said. "We can fix it when things go wrong, we can use that knowledge for technological applications that we haven't even dreamed of, and who knows, maybe we can even improve our ability to make decisions. It's the hardest question I can think of, and it's also the most interesting. It will take a long time, but it's something I think we will be able to answer."